How to identify your "Data Quality Savages"

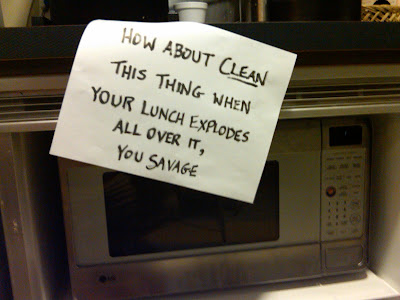

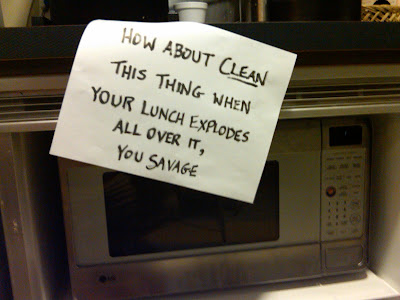

Went downstairs to our company kitchen and found the following inspiration for today's post...

A few weeks ago, I published a post titled "Data Quality Scorecard by client - and beyond...". In that post you'll find some steps on how to build a simple data quality scorecard for your client listing. We use a data quality score here at our shop to identify which clients have significant data quality issues and "need to be cleaned up". We also use the scorecards to see which clients are benefiting the most from our data quality/governance initiative, as well as to "brag" to our senior management about the progress and benefits of our group.

As you well know, some leaders "lead with the carrot": they praise success and try to get their teams to "do better" by giving incentives to folks who do the right thing. On occasion, however, you might find yourself in a situation where it's time to "lead with the stick". One "lead with the stick" option is to take the data quality scorecard in a different direction by building a scorecard by salesperson or account manager, something like the following:
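If you want to roll your own, here is a minimal sketch in Python of one way to build that kind of roll-up. Everything in it is an assumption for illustration: the client rows, the salesperson names, the field names, and the score formula (percent of records with no errors) are hypothetical, not the ones we use at our shop.

```python
from collections import defaultdict

# Hypothetical input: one row per client, with the owning salesperson,
# the number of records checked, and the number that failed a quality rule.
clients = [
    {"client": "Client A", "salesperson": "Alice", "records": 1000, "errors": 20},
    {"client": "Client B", "salesperson": "Alice", "records": 500, "errors": 10},
    {"client": "Client C", "salesperson": "Bob", "records": 800, "errors": 200},
    {"client": "Client D", "salesperson": "Carol", "records": 400, "errors": 4},
]


def scorecard_by_salesperson(rows):
    """Roll client-level error counts up to one score per salesperson.

    Score (an assumed formula) = percent of records without errors.
    Returns (name, records, errors, score) tuples, worst score first.
    """
    totals = defaultdict(lambda: {"records": 0, "errors": 0})
    for row in rows:
        entry = totals[row["salesperson"]]
        entry["records"] += row["records"]
        entry["errors"] += row["errors"]

    cards = []
    for name, entry in totals.items():
        records, errors = entry["records"], entry["errors"]
        # Guard against a salesperson whose clients have no records at all.
        score = 100.0 * (records - errors) / records if records else 0.0
        cards.append((name, records, errors, round(score, 1)))

    # Sort ascending by score so the worst offender is at the top.
    cards.sort(key=lambda card: card[3])
    return cards


for name, records, errors, score in scorecard_by_salesperson(clients):
    print(f"{name:<10} records={records:>6} errors={errors:>5} score={score:>5.1f}")
```

Sorting worst-first is a deliberate choice for a "stick" scorecard; flip the sort if you would rather lead with the carrot.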

Talk about incentive. Imagine publishing this to the sales or account teams, or showing it in a team meeting or some other forum. I don't know about you, but I certainly wouldn't want to be "Mr. Bill". Reminds me of one of my favorite quotes: "Oh Lucy! - You Gotta Lotta 'Splainin To Do"

Of course, leading with the carrot is a more politically correct and less confrontational method, but if you're out of ideas you might want to give this one a try.

Last note: At the DataFlux Ideas show in 2009 I sat in on a presentation where an organization was basing salespeople's commissions and bonuses on their respective clients' data quality errors - stated more clearly, folks whose clients had fewer problems got more bonus money. Talk about incentive and progressive thinking on their part.

Until next time...Rich

Comments

I ruminated on a similar topic with my blog post about using The Poor Data Quality Jar to penalize the “Data Quality Savages” and most of the commentary I received criticized the “public humiliation” aspect.

In my follow-up post The Scarlet DQ, I asked for better alternatives and many commenters suggested a positive incentive program much like the one you mentioned about commissions and bonuses based on fewer data quality errors.

I am currently reading the excellent book Drive - The Surprising Truth About What Motivates Us by Daniel Pink, where he shares research showing that using positive financial incentives to correct undesirable behavior often paradoxically has the opposite effect. Phil Wright (aka @faropress on Twitter) wrote an excellent blog post Can motivations impact the state of data quality? based on his reading of the book.

Best Regards,

Jim

Granted we have one or two times we fall off the mark, but a consistently low effort by your example helps leadership make "adjustments" to enhance DQ for the masses...

I like this...hmmm, maybe I can recommend this to my customers :)

I recently came across an organisation that engaged in a similar sort of league table which did not fully achieve the desired results. In this case a central team loaded large numbers of data items conforming to a standard template. Field teams then had to deactivate the old data items and schedule the new ones. Unfortunately, no allowance was made for local resource availability and workloads which resulted in some staff feeling under pressure to complete these updates to the exclusion of other data activities. One person was only stopped working through the night on this task when the core system was taken down for maintenance.

The moral of the story is that you need to ensure that you are measuring the right things and that results are treated in an appropriate way. A low score may indicate poor training, other work pressures, other people not providing the correct information etc.